How My Startup Survived a DDoS Attack

Ahhh getting DDoS'd. You hear about it but you never think it will happen to you… until it does.

Getting Ready for Bed

DDoS attacks would be much more pleasant if they happened at the start of working hours so that you can mitigate the attack with a fresh mind.

Unfortunately, ours started just as I was going to bed.

At midnight on November 22nd we started receiving support tickets and social media mentions that our app was down. This felt strange as our app is hosted on a pretty beefy set of servers and has been ticking along happily for years, so why now?

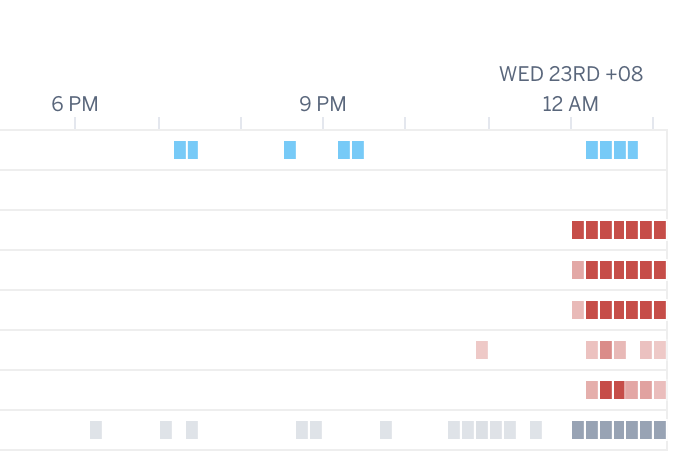

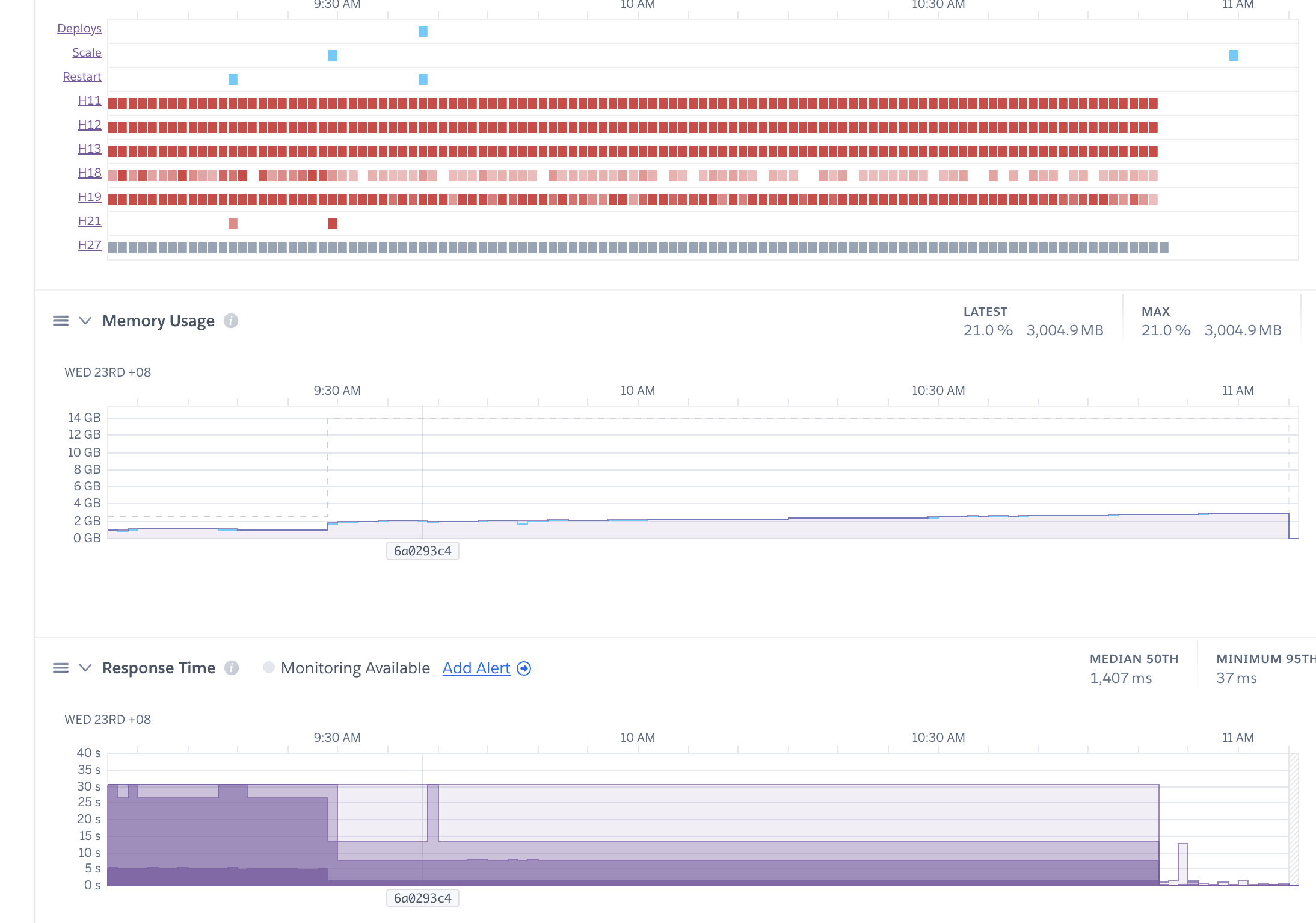

I logged into the Heroku dashboard to take a look:

That's more red dots than I've ever seen on this chart before. And the website was indeed down.

That's not good.

Ruling Out Simple Explanations

As the adrenaline started to pump wildly, I applied Occam's razor.

What's the simplest explanation for this? Looking at the chart, the downtime started bang on midnight.

Did some process break? Is this some Y2K-like date related bug? Did Heroku roll something back because I remember seeing something about them deleting servers on November something-something?

I ruled out these explanations within a few minutes and started to suspect I was under attack.

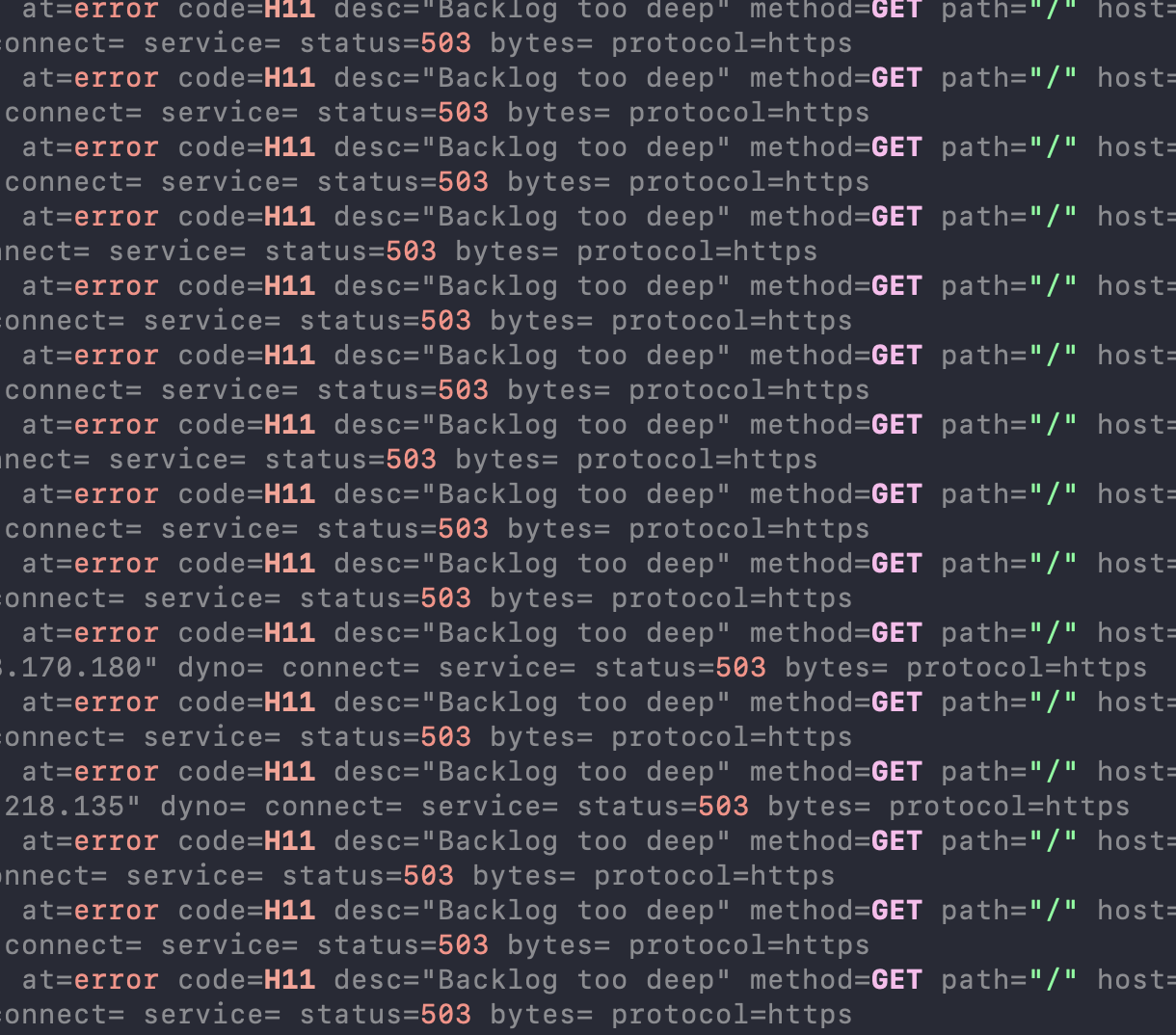

I checked the server logs using --tail to get a stream and boom, just a never-ending flood of erroring GET requests to "/" projectile vomiting out into my terminal.

I guess sleep is canceled for tonight.

Ransom Email

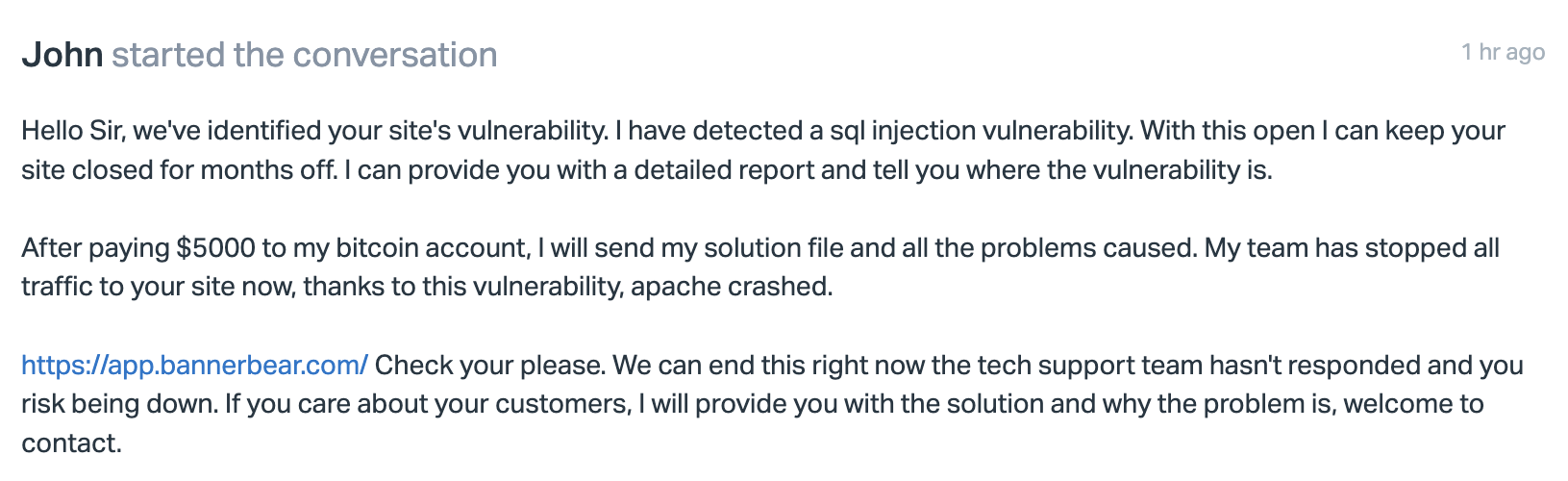

Turns out there was a ransom email in my inbox.

It came with a bit of misdirection, claiming this was an SQL injection attack. It's not, it's just an old-fashioned hammering.

I assume the claim is in there to confound and exhaust security teams as they pore over code looking for the attack vector, ultimately forcing the company to give in to the ransom.

From discussing with people on Twitter and in some tech chats I participate in, other folks have received the same email and were also DDoS'd.

So that's it then, this is an attack.

Wrangling Rack Attack

Bannerbear is built with Ruby on Rails and we use a gem called Rack Attack to apply rate limiting. My first thought: why wasn't this doing its job?

I thought maybe I had configured it wrong - shoutout to Nate Berkopec who helped me apply some patches to my existing Rack Attack config.

But this didn't bring the site back up.

The brutal reality dawned on us: the magnitude of the attack meant that it was already overwhelming the middleware layer where Rack Attack sits. The blocking or rate limiting needed to happen earlier than this, before the traffic ever reached our servers.
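To make that limitation concrete, here is a minimal sketch of the kind of per-client counting an application-level rate limiter does. This is not Rack Attack's actual code or configuration; the class name `FixedWindowThrottle`, the limit of 5 requests per 60 seconds, and the example IP are all made up for illustration.

```ruby
# Hypothetical sketch of application-level rate limiting (NOT Rack Attack's code).
# Counts requests per client key inside fixed time windows.
class FixedWindowThrottle
  def initialize(limit:, period:)
    @limit  = limit        # max requests allowed per window
    @period = period       # window length in seconds
    @counts = Hash.new(0)  # never expired here; a real limiter would use a cache with TTLs
  end

  # Returns true if the request is allowed, false if it should be rejected.
  # The app process still does this work for every request, allowed or not.
  def allow?(key, now = Time.now.to_i)
    window = now / @period
    @counts[[key, window]] += 1
    @counts[[key, window]] <= @limit
  end
end

throttle = FixedWindowThrottle.new(limit: 5, period: 60)

# Seven requests from the same (example) IP inside one window:
results = Array.new(7) { throttle.allow?("203.0.113.7", 120) }
# The first five are allowed, the last two are rejected.
```

The thing to notice is that even a rejected request has to reach the app before it can be counted and turned away. At a small scale that is cheap; under a flood, the counting itself becomes part of the load.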

Migrating to Cloudflare

This is a job for Cloudflare and if I'm honest with myself I should have migrated ages ago rather than having to do it under pressure now.

Luckily, it's a fairly simple process. Cloudflare imports your DNS settings, you switch nameservers, and that's pretty much it.

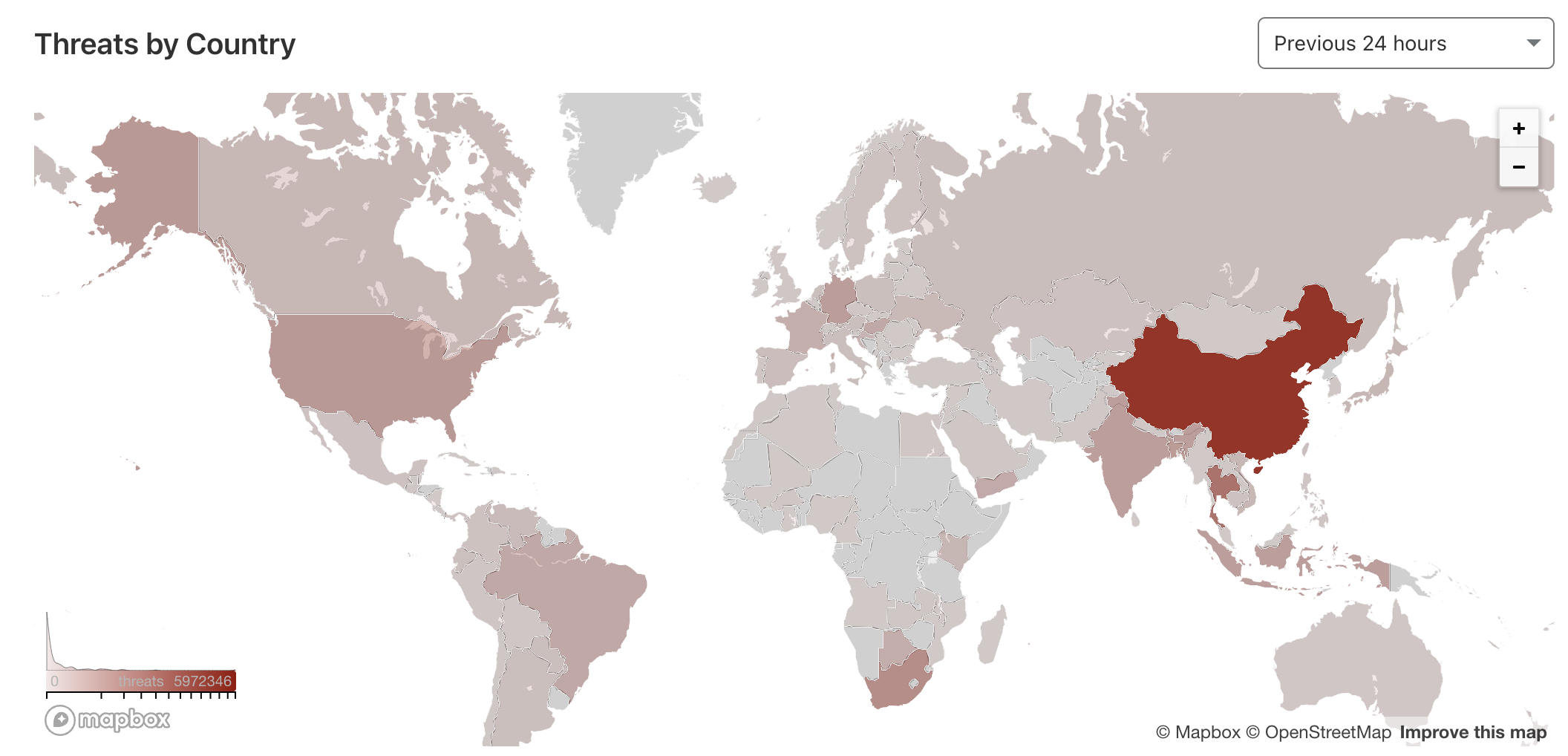

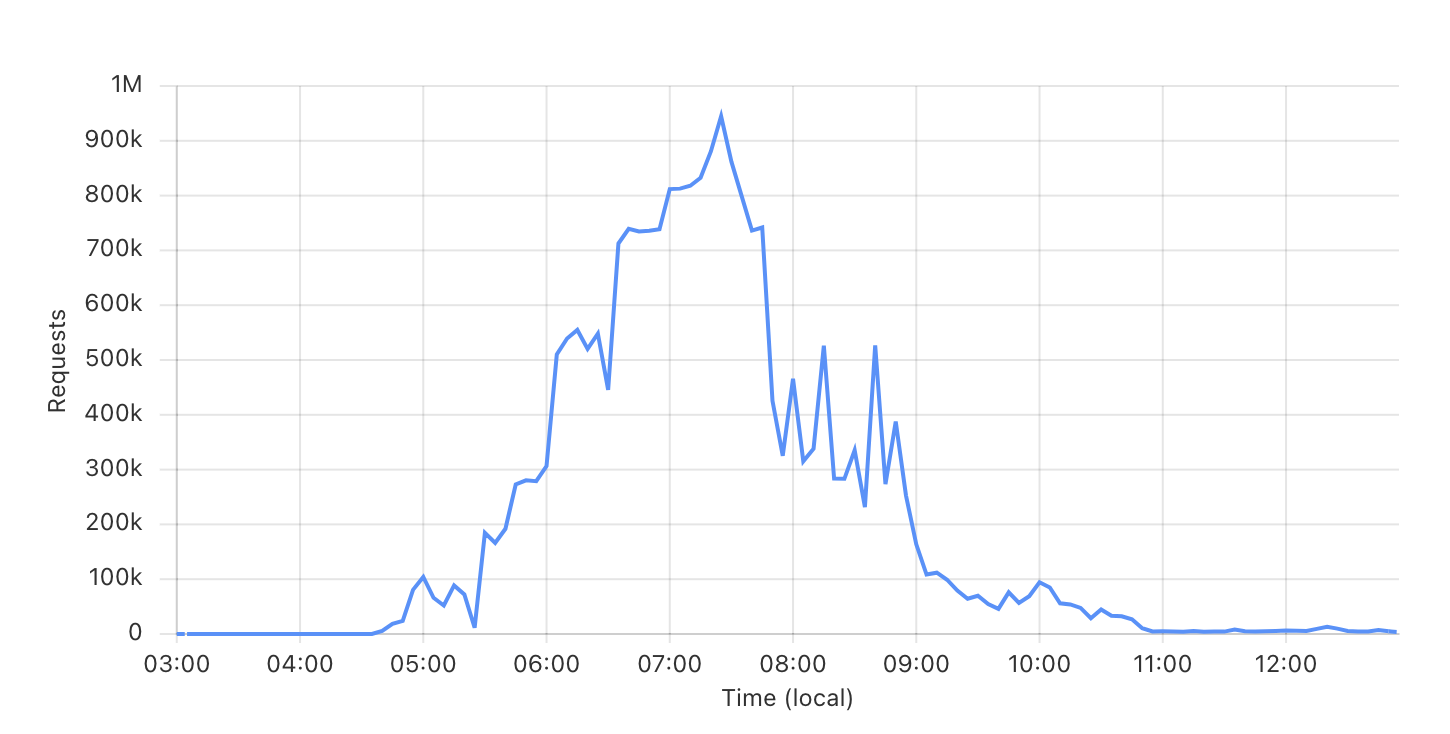

Now I could finally see how much traffic was actually hitting us.

The chart ramps up as our new DNS propagates and Cloudflare begins to bear the brunt of the attack, then comes back down as I apply the firewall settings described below.

The chart peaks at about 1 million hits per 5-minute period. We are now at T+5 hours, so extrapolated out, that's a big chunk of traffic. I'm assuming something like 12 million requests per hour, for 5+ hours.
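For anyone who wants to check the extrapolation, here is the back-of-the-envelope arithmetic as a few lines of Ruby. It uses only the figures quoted above and assumes the peak rate held for the whole five hours, which it almost certainly didn't, so treat the total as an upper bound.

```ruby
# Rough estimate from the chart figures quoted above.
peak_hits_per_5_min = 1_000_000  # peak read off the Cloudflare chart
windows_per_hour    = 60 / 5     # twelve 5-minute windows in an hour
attack_hours        = 5          # "T+5 hours" at the time

hits_per_hour = peak_hits_per_5_min * windows_per_hour
total_hits    = hits_per_hour * attack_hours  # assumes peak rate throughout (upper bound)

puts "~#{hits_per_hour} requests per hour at peak"
puts "~#{total_hits} requests over #{attack_hours} hours at most"
```

That works out to 12 million requests per hour, and up to roughly 60 million over the five hours.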

Tweaking Firewall Settings

The flood of traffic was still coming. Hang on, I thought Cloudflare was supposed to magically give my site superpowers?

Important: Cloudflare gives you the tools to deal with a DDoS, but since every site is different (and every type of attack too, I guess) you need to set up your own firewall rules to mitigate the attack.

Huge shoutout to Tom Moor who very helpfully jumped on a Zoom call with me and explained how to set up some firewall rules to block the traffic.

YMMV but for us the majority of the DDoS traffic was coming from China, so we started with an outright block of all China-originated traffic.

There's also a "Managed Challenge" set on the "/" path, meaning you may see a challenge screen if Cloudflare suspects you of being a risk, and it will block you if you fail it.
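If it helps to see the logic of those two rules side by side, here is a rough model of them in plain Ruby. This is not Cloudflare's rule syntax or API, just an illustration. The method name `firewall_action`, the rule order, and the `/pricing` path are my own assumptions for the sketch.

```ruby
# Hypothetical model of the two firewall rules described above.
# Plain Ruby for illustration only, NOT Cloudflare's rule language.
# Assumes the country block is evaluated before the challenge rule.
def firewall_action(country:, path:)
  return :block if country == "CN"          # rule 1: outright block by country
  return :managed_challenge if path == "/"  # rule 2: Cloudflare may challenge visitors to "/"
  :allow                                    # everything else passes through
end

firewall_action(country: "CN", path: "/")         # => :block
firewall_action(country: "US", path: "/")         # => :managed_challenge
firewall_action(country: "US", path: "/pricing")  # => :allow
```

With a managed challenge, Cloudflare decides per visitor whether to actually show a challenge, so `:managed_challenge` here only means the rule matched, not that every visitor gets interrupted.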

Glorious Silence

With some simple firewall rules in place, traffic levels faded down to normal, and the site came back up.

Note the beautiful silence on the right hand side of this chart.

Conclusion

This attacker seems to be targeting known indiehacker projects. I can confirm at least half a dozen other folks I know who have been targeted by the same attacker.

I would recommend migrating your DNS to Cloudflare as soon as possible so that you can switch on these tools easily, if / when needed.

One added benefit is we now have a really nice GUI that lets us rate-limit our API with much more granularity than before. That alone makes it worth migrating to Cloudflare, especially if you have a public API.